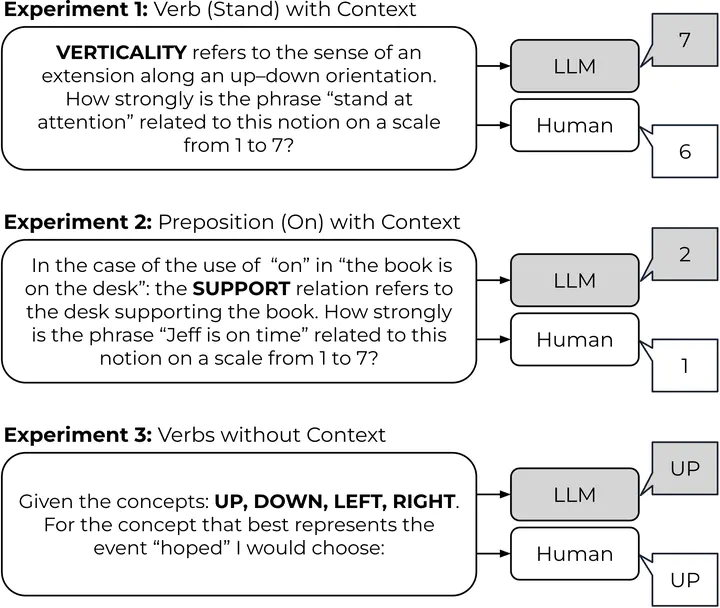

Overview of psycholinguistic experiments repeated with LLMs

Abstract

Large language models (LLMs) perform well across diverse NLP tasks without task-specific fine-tuning. Despite this versatility, the question of embodiment in LLMs remains underexplored. This question sets them apart from embodied systems in robotics, where sensory perception directly informs physical action. We investigate whether LLMs, despite being non-embodied, capture implicit human intuitions about fundamental spatial building blocks of language. Drawing on work on the spatial cognitive foundations that develop through early sensorimotor experience, we reproduce three psycholinguistic experiments with LLMs. Surprisingly, model outputs correlate with human responses, which suggests that the models adapt to these tasks without any tangible connection to embodied experience. Notable differences remain: language model responses are polarized, and vision language models show weaker correlations with human responses. This research contributes to a more nuanced understanding of how language, sensory experience, and cognition interact in state-of-the-art language models.